CUDA implementation of the Layer Normalization operation for neural networks.

Public Types

- using MR = typename CudaDevice::MR
- using UnaryOperationBase = UnaryOperation< DeviceType::Cuda, TInput, TOutput >

Public Types inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput >

- using MR = std::conditional_t< TDeviceType==DeviceType::Cuda, CudaMemoryResource, HostMemoryResource >

  Memory resource type based on device type.
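The inherited MR alias selects a memory resource at compile time with std::conditional_t. The standalone sketch below reproduces that selection pattern; DeviceType, CudaMemoryResource and HostMemoryResource are hypothetical empty stand-ins for the Mila types, and MemoryResourceFor is an illustrative name, not part of Mila.

```cpp
#include <type_traits>

// Hypothetical stand-ins for the Mila types named in the alias above.
// The real DeviceType, CudaMemoryResource and HostMemoryResource live in Mila.
enum class DeviceType { Cpu, Cuda };
struct CudaMemoryResource {};
struct HostMemoryResource {};

// Picks the memory resource type from the device type at compile time,
// following the same shape as UnaryOperation's MR alias.
template <DeviceType TDeviceType>
using MemoryResourceFor = std::conditional_t<TDeviceType == DeviceType::Cuda,
                                             CudaMemoryResource,
                                             HostMemoryResource>;

// The selection can be checked entirely at compile time.
static_assert(std::is_same_v<MemoryResourceFor<DeviceType::Cuda>, CudaMemoryResource>);
static_assert(std::is_same_v<MemoryResourceFor<DeviceType::Cpu>, HostMemoryResource>);
```

Because the choice is resolved by the compiler, code written against MR carries no runtime branch on the device type.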

Public Member Functions

- CudaLayerNormOp (const LayerNormConfig &config)

  Constructs a new CUDA Layer Normalization operation with the default device context.

- CudaLayerNormOp (std::shared_ptr< DeviceContext > context, const LayerNormConfig &config)

  Constructs a new CUDA Layer Normalization operation with a specific device context.

- void backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output, const Tensor< TOutput, MR > &output_gradient, const std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameter_gradients, Tensor< TInput, MR > &input_gradient, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const

  Performs the backward pass of the Layer Normalization operation.

- void forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const override

  Performs the forward pass of the Layer Normalization operation on CUDA.

- std::string getName () const override

  Gets the name of this operation.

Public Member Functions inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput >

- UnaryOperation (OperationType operation_type)

  Constructs a UnaryOperation with the specified operation type.

- UnaryOperation (OperationType operation_type, std::shared_ptr< DeviceContext > context)

  Constructs a UnaryOperation with the specified operation type and device context.

- virtual ~UnaryOperation ()=default

  Virtual destructor for proper cleanup of derived classes.

- virtual void backward (const Tensor< TInput, MR > &grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_grads) const

  Executes the backward pass of a unary operation.

- virtual void backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output_grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameter_grads, Tensor< TInput, MR > &input_grad, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const

  Executes the comprehensive backward pass of a unary operation.

- virtual void forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const =0

  Executes the forward pass of a unary operation.

Public Member Functions inherited from Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput >

- OperationBase (OperationType operation_type, std::shared_ptr< DeviceContext > context)

  Constructs an OperationBase object with the specified operation type and device context.

- virtual ~OperationBase ()=default

  Virtual destructor for the OperationBase class.

- std::shared_ptr< DeviceContext > getDeviceContext () const

  Gets the device context associated with this operation.

- DeviceType getDeviceType () const

  Gets the device type for this operation.

- OperationType getOperationType () const

  Gets the operation type enumeration value.

Private Attributes

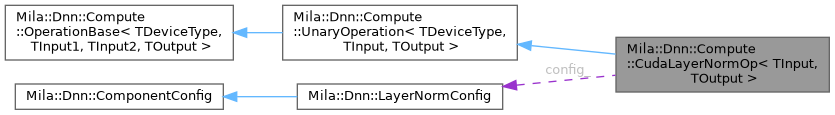

- LayerNormConfig config_

  Configuration for the layer normalization operation.

Detailed Description

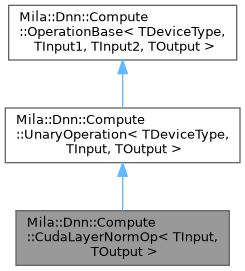

class Mila::Dnn::Compute::CudaLayerNormOp< TInput, TOutput >

CUDA implementation of the Layer Normalization operation for neural networks.

This class provides a CUDA-based implementation of the Layer Normalization operation, which normalizes the activations of a layer for each example independently of the other examples in the batch; it is usually applied before the activation function. Layer normalization helps stabilize training and can reduce the training time required to learn the parameters of neural networks.

The normalization is applied across the last (feature) dimension and is followed by learnable scale (gamma) and shift (beta) parameters. The computation runs in CUDA kernels on NVIDIA GPUs.
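For reference, the standard Layer Normalization formulation for a single feature vector x of length C is written out below. The small constant ε (added for numerical stability) and the biased variance (division by C) are assumptions taken from the usual definition, not details confirmed by this page; rstd is the reciprocal standard deviation that this class caches in its output state.

```latex
\begin{aligned}
\mu &= \frac{1}{C}\sum_{i=1}^{C} x_i, \qquad
\sigma^2 = \frac{1}{C}\sum_{i=1}^{C} (x_i - \mu)^2, \qquad
\mathrm{rstd} = \frac{1}{\sqrt{\sigma^2 + \epsilon}}, \\
\hat{x}_i &= (x_i - \mu)\,\mathrm{rstd}, \qquad
y_i = \gamma_i \hat{x}_i + \beta_i
\end{aligned}
```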

- Template Parameters
  - TInput: The data type of the input tensor elements.
  - TOutput: The data type of the output tensor elements (defaults to the input type).

Member Typedef Documentation

◆ MR

using Mila::Dnn::Compute::CudaLayerNormOp< TInput, TOutput >::MR = typename CudaDevice::MR

◆ UnaryOperationBase

using Mila::Dnn::Compute::CudaLayerNormOp< TInput, TOutput >::UnaryOperationBase = UnaryOperation<DeviceType::Cuda, TInput, TOutput>

Constructor & Destructor Documentation

◆ CudaLayerNormOp() [1/2]

CudaLayerNormOp (const LayerNormConfig &config) [inline]

Constructs a new CUDA Layer Normalization operation with the default device context.

Initializes the operation with a CUDA device context (defaults to CUDA:0).

◆ CudaLayerNormOp() [2/2]

CudaLayerNormOp (std::shared_ptr< DeviceContext > context, const LayerNormConfig &config) [inline]

Constructs a new CUDA Layer Normalization operation with a specific device context.

- Parameters
  - context: The device context to use for this operation.
  - config: Configuration for the layer normalization operation.

- Exceptions
  - std::runtime_error: If the context is not for a CUDA device.

Member Function Documentation

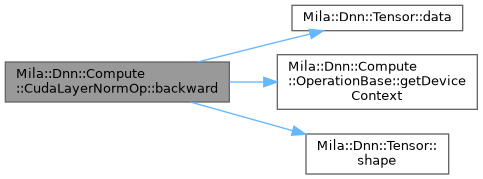

◆ backward()

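void backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output, const Tensor< TOutput, MR > &output_gradient, const std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameter_gradients, Tensor< TInput, MR > &input_gradient, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const [inline]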

Performs the backward pass of the Layer Normalization operation.

Computes gradients with respect to inputs, weights, and biases.
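Under the standard formulation (x̂_i = (x_i − μ)·rstd and y_i = γ_i x̂_i + β_i, with μ and the variance taken over the C features of one [b, t] position), the gradients this pass must produce can be written as follows. This is the textbook derivation, offered as a sketch; the page does not spell out the kernel's exact arithmetic. Here g_i denotes the incoming output gradient, γ and β are the weight and bias, and the sums for γ and β run over every [b, t] position.

```latex
\begin{aligned}
\frac{\partial L}{\partial \beta_i} &= \sum_{b,t} g_i, \qquad
\frac{\partial L}{\partial \gamma_i} = \sum_{b,t} g_i \hat{x}_i, \\
\frac{\partial L}{\partial x_i} &= \mathrm{rstd}\left(\gamma_i g_i
  - \frac{1}{C}\sum_{j=1}^{C} \gamma_j g_j
  - \hat{x}_i \, \frac{1}{C}\sum_{j=1}^{C} \gamma_j g_j \hat{x}_j\right)
\end{aligned}
```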

- Parameters
  - input: Input tensor from the forward pass.
  - output: Output tensor from the forward pass.
  - output_gradient: Gradient of the loss with respect to the output.
  - parameters: Parameter tensors from the forward pass [weight, bias].
  - parameter_gradients: Gradients for the parameters [d_weight, d_bias].
  - input_gradient: Gradient of the loss with respect to the input.
  - properties: Additional attributes for the operation.
  - output_state: Cached tensors from the forward pass [mean, rstd].
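A CPU reference sketch of the backward computation follows, assuming contiguous row-major float data with B·T rows of C features, std::vector standing in for Mila's Tensor, and a caller-chosen ε. layernorm_stats and layernorm_backward_ref are hypothetical names, not Mila API; the code illustrates what the backward pass computes, not how the CUDA kernels are organized. layernorm_stats rebuilds the [mean, rstd] cache that the forward pass would normally supply through output_state.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: rebuilds the per-row [mean, rstd] cache a forward pass stores.
// x is row-major [rows, C] with rows = B * T; eps is an assumed configuration value.
void layernorm_stats(const std::vector<float>& x, std::size_t rows, std::size_t C,
                     float eps, std::vector<float>& mean, std::vector<float>& rstd) {
    mean.assign(rows, 0.0f);
    rstd.assign(rows, 0.0f);
    for (std::size_t r = 0; r < rows; ++r) {
        const float* xr = &x[r * C];
        float m = 0.0f;
        for (std::size_t i = 0; i < C; ++i) m += xr[i];
        m /= static_cast<float>(C);
        float v = 0.0f;
        for (std::size_t i = 0; i < C; ++i) v += (xr[i] - m) * (xr[i] - m);
        v /= static_cast<float>(C);
        mean[r] = m;
        rstd[r] = 1.0f / std::sqrt(v + eps);
    }
}

// Hypothetical CPU reference for the LayerNorm backward pass.
// Overwrites dx ([rows, C]) and dweight/dbias ([C], summed over all rows).
void layernorm_backward_ref(const std::vector<float>& x, const std::vector<float>& dy,
                            const std::vector<float>& weight,
                            const std::vector<float>& mean, const std::vector<float>& rstd,
                            std::size_t rows, std::size_t C,
                            std::vector<float>& dx, std::vector<float>& dweight,
                            std::vector<float>& dbias) {
    dx.assign(rows * C, 0.0f);
    dweight.assign(C, 0.0f);
    dbias.assign(C, 0.0f);
    for (std::size_t r = 0; r < rows; ++r) {
        const float* xr = &x[r * C];
        const float* dyr = &dy[r * C];
        // First pass: the two row means that appear in the input-gradient formula.
        float mean_dxhat = 0.0f;
        float mean_dxhat_xhat = 0.0f;
        for (std::size_t i = 0; i < C; ++i) {
            const float xhat = (xr[i] - mean[r]) * rstd[r];
            const float dxhat = weight[i] * dyr[i];
            mean_dxhat += dxhat;
            mean_dxhat_xhat += dxhat * xhat;
        }
        mean_dxhat /= static_cast<float>(C);
        mean_dxhat_xhat /= static_cast<float>(C);
        // Second pass: parameter gradients and input gradient.
        for (std::size_t i = 0; i < C; ++i) {
            const float xhat = (xr[i] - mean[r]) * rstd[r];
            const float dxhat = weight[i] * dyr[i];
            dbias[i] += dyr[i];
            dweight[i] += dyr[i] * xhat;
            dx[r * C + i] = rstd[r] * (dxhat - mean_dxhat - xhat * mean_dxhat_xhat);
        }
    }
}
```

This sketch overwrites the gradient buffers; whether the CUDA implementation overwrites or accumulates into parameter_gradients is not stated on this page. A reference like this is mainly useful for checking a kernel's results, for example against finite differences.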

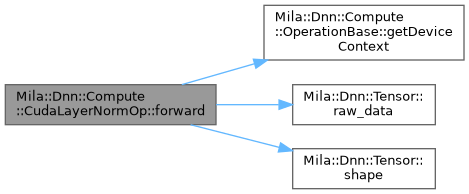

◆ forward()

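void forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const [inline, override]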

Performs the forward pass of the Layer Normalization operation on CUDA.

Normalizes the input tensor across the feature dimension (last dimension) by:

- Computing the mean and standard deviation of each feature vector (one per [b, t] position)

- Normalizing the values using these statistics

- Applying learnable scale and shift parameters

The computation runs on the GPU in CUDA kernels.

- Parameters
  - input: Input tensor of shape [B, T, C] to be normalized, where B is the batch size, T is the sequence length, and C is the feature dimension.
  - parameters: Vector of parameter tensors [weight, bias], where weight (gamma) and bias (beta) both have shape [C].
  - properties: Additional attributes for the operation.
  - output: Output tensor of shape [B, T, C] containing the normalized values.
  - output_state: Vector of tensors holding intermediate results [mean, rstd], where mean holds the mean of each feature vector and rstd the reciprocal of its standard deviation.
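The forward steps described for this method can be expressed as a serial CPU reference sketch, assuming a contiguous row-major [B, T, C] float layout, std::vector in place of Mila's Tensor, and a caller-supplied ε. layernorm_forward_ref is a hypothetical name, not part of Mila; it mirrors the math, not the CUDA kernels' parallel structure.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical CPU reference for the LayerNorm forward pass (not the CUDA kernel).
// input/output are row-major [B, T, C]; weight (gamma) and bias (beta) are [C].
// mean/rstd receive one value per (b, t) position, like the [mean, rstd] output state.
// eps is an assumed value; the real one would come from the operation's configuration.
void layernorm_forward_ref(const std::vector<float>& input,
                           const std::vector<float>& weight,
                           const std::vector<float>& bias,
                           std::size_t B, std::size_t T, std::size_t C, float eps,
                           std::vector<float>& output,
                           std::vector<float>& mean, std::vector<float>& rstd) {
    const std::size_t rows = B * T;
    output.assign(rows * C, 0.0f);
    mean.assign(rows, 0.0f);
    rstd.assign(rows, 0.0f);
    for (std::size_t r = 0; r < rows; ++r) {
        const float* x = &input[r * C];
        // 1. Mean over the feature dimension.
        float m = 0.0f;
        for (std::size_t i = 0; i < C; ++i) m += x[i];
        m /= static_cast<float>(C);
        // 2. Biased variance, then the reciprocal standard deviation.
        float v = 0.0f;
        for (std::size_t i = 0; i < C; ++i) v += (x[i] - m) * (x[i] - m);
        v /= static_cast<float>(C);
        const float s = 1.0f / std::sqrt(v + eps);
        // 3. Normalize, then apply the learnable scale and shift.
        for (std::size_t i = 0; i < C; ++i) {
            output[r * C + i] = (x[i] - m) * s * weight[i] + bias[i];
        }
        mean[r] = m;
        rstd[r] = s;
    }
}
```

Each [b, t] row is independent of the others, which is what lets a GPU implementation process rows in parallel; the loops here are serial only for clarity.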

◆ getName()

std::string getName () const [inline, override, virtual]

Gets the name of this operation.

- Returns
  - std::string: The name of the operation ("Cuda::LayerNormOp").

Implements Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput >.

Member Data Documentation

◆ config_

LayerNormConfig config_ [private]

Configuration for the layer normalization operation.

The documentation for this class was generated from the following file:

- /home/runner/work/Mila/Mila/Mila/Src/Dnn/Compute/Operations/Cuda/CudaLayerNormOp.ixx