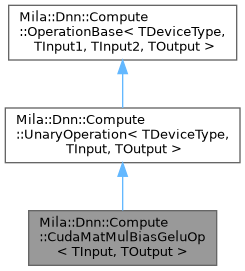

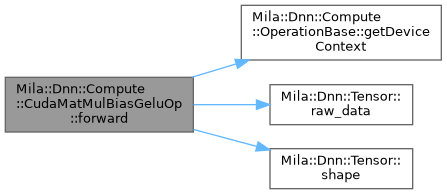

CUDA implementation of the fused MatMul-Bias-GELU operation.

Public Types | |

| using | MR = typename CudaDevice::MR |

| using | UnaryOperationBase = UnaryOperation< DeviceType::Cuda, TInput, TOutput > |

Public Types inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput > | |

| using | MR = std::conditional_t< TDeviceType==DeviceType::Cuda, CudaMemoryResource, HostMemoryResource > |

| Memory resource type based on device type. | |

Public Member Functions | |

| CudaMatMulBiasGeluOp () | |

| Constructs a new CUDA MatMul-Bias-GELU fused operation with the default device context. | |

| CudaMatMulBiasGeluOp (std::shared_ptr< DeviceContext > context) | |

| Constructs a new CUDA MatMul-Bias-GELU fused operation with a specific device context. | |

| void | backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output, const Tensor< TOutput, MR > &output_gradient, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameter_gradients, Tensor< TInput, MR > &input_gradient, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const |

| Performs the backward pass of the fused MatMul-Bias-GELU operation. | |

| void | forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const override |

| Performs the forward pass of the fused MatMul-Bias-GELU operation on CUDA. | |

| std::string | getName () const override |

| Gets the name of this operation. | |

Public Member Functions inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput > | |

| UnaryOperation (OperationType operation_type) | |

| Constructs a UnaryOperation with the specified operation type. | |

| UnaryOperation (OperationType operation_type, std::shared_ptr< DeviceContext > context) | |

| Constructs a UnaryOperation with the specified operation type and device context. | |

| virtual | ~UnaryOperation ()=default |

| Virtual destructor for proper cleanup of derived classes. | |

| virtual void | backward (const Tensor< TInput, MR > &grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_grads) const |

| Executes the backward pass of a unary operation. | |

| virtual void | backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output_grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameter_grads, Tensor< TInput, MR > &input_grad, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const |

| Executes the comprehensive backward pass of a unary operation. | |

| virtual void | forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const =0 |

| Executes the forward pass of a unary operation. | |

Public Member Functions inherited from Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput > | |

| OperationBase (OperationType operation_type, std::shared_ptr< DeviceContext > context) | |

| Constructs an OperationBase object with a specific device context and compute precision. | |

| virtual | ~OperationBase ()=default |

| Virtual destructor for the OperationBase class. | |

| std::shared_ptr< DeviceContext > | getDeviceContext () const |

| Gets the device context associated with this operation. | |

| DeviceType | getDeviceType () const |

| Gets the device type for this operation. | |

| OperationType | getOperationType () const |

| Gets the operation type enumeration value. | |

Static Public Member Functions | |

| static const std::string & | className () |

| Gets the class name of this operation. | |

Detailed Description

requires ValidFloatTensorTypes<TInput, TOutput>

class Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >

CUDA implementation of the fused MatMul-Bias-GELU operation.

This class provides a CUDA-based implementation of a fused operation that combines matrix multiplication, bias addition, and GELU activation in a single operation. Fusing these operations improves performance by reducing memory traffic and kernel launch overhead.

The implementation is optimized for NVIDIA GPUs using cuBLASLt for high-performance computation of the fused operation.
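To make the fused computation concrete, the following is a minimal CPU reference sketch, not the library's implementation. The names `gelu_tanh` and `matmul_bias_gelu_ref` are hypothetical, and the tanh approximation of GELU is an assumption, since this page does not state which GELU variant the CUDA kernel applies.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Tanh approximation of GELU. Assumption: the exact variant used by the
// CUDA implementation is not stated in this documentation.
inline float gelu_tanh(float x) {
    const float k = 0.7978845608f;  // sqrt(2 / pi)
    return 0.5f * x * (1.0f + std::tanh(k * (x + 0.044715f * x * x * x)));
}

// Hypothetical CPU reference for the fused operation:
//   out[m, n] = GELU( sum_k in[m, k] * w[k, n] + bias[n] )
// `in` is row-major [M, K], `w` is row-major [K, N], `bias` has N elements.
std::vector<float> matmul_bias_gelu_ref(const std::vector<float>& in,
                                        const std::vector<float>& w,
                                        const std::vector<float>& bias,
                                        std::size_t M, std::size_t K,
                                        std::size_t N) {
    std::vector<float> out(M * N);
    for (std::size_t m = 0; m < M; ++m) {
        for (std::size_t n = 0; n < N; ++n) {
            float acc = bias[n];  // start from the bias term
            for (std::size_t k = 0; k < K; ++k) {
                acc += in[m * K + k] * w[k * N + n];
            }
            // The activation is applied while the accumulator is still local,
            // so the pre-activation value is never written to memory.
            out[m * N + n] = gelu_tanh(acc);
        }
    }
    return out;
}
```

An unfused pipeline would write the matrix product to memory, read it back to add the bias, and read it again to apply GELU. Applying all three steps to the accumulator avoids those intermediate round trips, which is the memory-traffic saving described above.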

- Template Parameters

-

TInput The data type of the input tensor elements.

TOutput The data type for computation and output (defaults to the input type).

Member Typedef Documentation

◆ MR

| using Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::MR = typename CudaDevice::MR |

◆ UnaryOperationBase

| using Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::UnaryOperationBase = UnaryOperation<DeviceType::Cuda, TInput, TOutput> |

Constructor & Destructor Documentation

◆ CudaMatMulBiasGeluOp() [1/2]

| Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::CudaMatMulBiasGeluOp () | inline |

Constructs a new CUDA MatMul-Bias-GELU fused operation with the default device context.

Initializes the operation with a CUDA device context (defaults to CUDA:0).

◆ CudaMatMulBiasGeluOp() [2/2]

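| Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::CudaMatMulBiasGeluOp (std::shared_ptr< DeviceContext > context) | inline |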

Constructs a new CUDA MatMul-Bias-GELU fused operation with a specific device context.

- Parameters

-

context The device context to use for this operation.

- Exceptions

-

std::runtime_error If the context is not for a CUDA device.
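The validation described above can be illustrated with a small, self-contained sketch. `DeviceKind`, `FakeContext`, and `FakeCudaOp` are hypothetical stand-ins, not Mila types, and rejecting a null context is an additional assumption that this page does not document.

```cpp
#include <memory>
#include <stdexcept>
#include <utility>

// Hypothetical stand-ins for the library's device type and device context.
enum class DeviceKind { Cpu, Cuda };

struct FakeContext {
    DeviceKind kind;
};

// Sketch of an operation that only accepts a CUDA context.
class FakeCudaOp {
public:
    explicit FakeCudaOp(std::shared_ptr<FakeContext> context)
        : context_(std::move(context)) {
        // Assumption: a null context is rejected the same way as a
        // non-CUDA context.
        if (!context_ || context_->kind != DeviceKind::Cuda) {
            throw std::runtime_error("operation requires a CUDA device context");
        }
    }

    std::shared_ptr<FakeContext> context() const { return context_; }

private:
    std::shared_ptr<FakeContext> context_;
};
```

Failing in the constructor means a mismatched context is reported when the operation is created rather than at the first `forward` call.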

Member Function Documentation

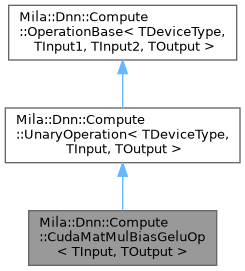

◆ backward()

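| void Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output, const Tensor< TOutput, MR > &output_gradient, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameter_gradients, Tensor< TInput, MR > &input_gradient, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const | inline |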

Performs the backward pass of the fused MatMul-Bias-GELU operation.

Computes gradients with respect to inputs, weights, and biases.

- Parameters

-

| input | Input tensor from the forward pass. |

| output | Output tensor from the forward pass. |

| output_gradient | Gradient of the loss with respect to the output. |

| parameters | Parameter tensors from the forward pass [weights, bias]. |

| parameter_gradients | Gradients for the parameters [d_weights, d_bias]. |

| input_gradient | Gradient of the loss with respect to the input. |

| properties | Additional attributes for the operation. |

| output_state | Cache tensors from the forward pass. |
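For reference, the gradients named above follow from the chain rule. The derivation below is a sketch under stated assumptions: the input is flattened to a matrix $X$ of shape $[B \cdot S, K]$, the weights $W$ have shape $[K, N]$, and $Z$ denotes the pre-activation. The symbols are introduced here for illustration, and this page does not state how the implementation actually evaluates these terms.

$$
\begin{aligned}
Z &= XW + \mathbf{1}\,b^{\top}, \qquad Y = \operatorname{GELU}(Z) \\
\frac{\partial L}{\partial Z} &= \frac{\partial L}{\partial Y} \odot \operatorname{GELU}'(Z) \\
\frac{\partial L}{\partial W} &= X^{\top}\,\frac{\partial L}{\partial Z} \\
\frac{\partial L}{\partial b} &= \Big(\frac{\partial L}{\partial Z}\Big)^{\top}\mathbf{1} \\
\frac{\partial L}{\partial X} &= \frac{\partial L}{\partial Z}\,W^{\top}
\end{aligned}
$$

For the exact form $\operatorname{GELU}(z) = z\,\Phi(z)$, where $\Phi$ is the standard normal CDF and $\varphi$ its density, the derivative is $\operatorname{GELU}'(z) = \Phi(z) + z\,\varphi(z)$. In the notation of the parameter list, $\partial L/\partial Y$ is `output_gradient`, $\partial L/\partial W$ and $\partial L/\partial b$ are the entries of `parameter_gradients`, and $\partial L/\partial X$ is `input_gradient`.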

◆ className()

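| static const std::string & Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::className () | inline static |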

Gets the class name of this operation.

- Returns

- const std::string& The class name of the operation.

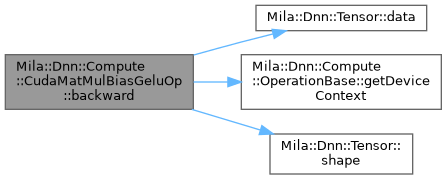

◆ forward()

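| void Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const | inline override |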

Performs the forward pass of the fused MatMul-Bias-GELU operation on CUDA.

This method efficiently computes a matrix multiplication followed by a bias addition and GELU activation in a single fused operation. The implementation uses cuBLASLt for optimal performance on NVIDIA GPUs.

- Parameters

-

| input | Input tensor of shape [B, S, K], where B is the batch size, S is the sequence length, and K is the input dimension. |

| parameters | Vector of parameter tensors, where parameters[0] is the weights tensor of shape [K, N] and parameters[1] is the bias tensor of shape [N]. |

| properties | Additional attributes for the operation. |

| output | Output tensor of shape [B, S, N] that receives the result. |

| output_state | Cache for intermediate results (not used in this operation). |
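The shape contract above can be sketched as a small validation helper. This is an illustration only: `GemmDims`, `fused_gemm_dims`, and `fused_output_shape` are hypothetical names, and whether the actual operation performs these checks is not stated on this page.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Dimensions of the 2-D matrix product that a [B, S, K] input reduces to.
struct GemmDims {
    std::size_t M;  // rows of the flattened input, B * S
    std::size_t K;  // shared inner dimension
    std::size_t N;  // output features
};

// Hypothetical helper: checks input [B, S, K], weights [K, N], and bias [N],
// then returns the flattened GEMM dimensions.
GemmDims fused_gemm_dims(const std::vector<std::size_t>& input_shape,
                         const std::vector<std::size_t>& weight_shape,
                         const std::vector<std::size_t>& bias_shape) {
    if (input_shape.size() != 3 || weight_shape.size() != 2 ||
        bias_shape.size() != 1) {
        throw std::invalid_argument(
            "expected input [B, S, K], weights [K, N], bias [N]");
    }
    const std::size_t K = input_shape[2];
    if (weight_shape[0] != K) {
        throw std::invalid_argument("weights rows must equal input dimension K");
    }
    const std::size_t N = weight_shape[1];
    if (bias_shape[0] != N) {
        throw std::invalid_argument("bias length must equal weights columns N");
    }
    return GemmDims{input_shape[0] * input_shape[1], K, N};
}

// Output keeps the leading [B, S] dimensions and replaces K with N.
std::vector<std::size_t> fused_output_shape(
    const std::vector<std::size_t>& input_shape, std::size_t N) {
    return {input_shape[0], input_shape[1], N};
}
```

Because the batch and sequence dimensions are contiguous in a row-major layout, a [B, S, K] input can be treated as a single [B*S, K] matrix without copying, so one matrix product covers the whole batch.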

◆ getName()

| std::string Mila::Dnn::Compute::CudaMatMulBiasGeluOp< TInput, TOutput >::getName () const | inline override virtual |

Gets the name of this operation.

- Returns

- std::string The name of the operation ("Cuda::MatMulBiasGeluOp").

Implements Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput >.

The documentation for this class was generated from the following file:

- /home/runner/work/Mila/Mila/Mila/Src/Dnn/Compute/Operations/Cuda/MatMulBiasActivation.ixx