CUDA implementation of the softmax operation for neural networks.

Public Types | |

| using | MR = typename CudaDevice::MR |

| using | UnaryOperationBase = UnaryOperation< DeviceType::Cuda, TInput, TOutput > |

Public Types inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput > | |

| using | MR = std::conditional_t< TDeviceType==DeviceType::Cuda, CudaMemoryResource, HostMemoryResource > |

| Memory resource type based on device type. | |

Public Member Functions | |

| CudaSoftmaxOp (const SoftmaxConfig &config) | |

| Constructs a new CUDA Softmax operation with the default device context. | |

| CudaSoftmaxOp (std::shared_ptr< DeviceContext > context, const SoftmaxConfig &config) | |

| Constructs a new CUDA Softmax operation with a specific device context. | |

| void | backward (const Tensor< TInput, MR > &input, const Tensor< TInput, MR > &output, const Tensor< TInput, MR > &output_gradient, const std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameter_gradients, Tensor< TInput, MR > &input_gradient, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TInput, MR > > > &output_state) const |

| Performs the backward pass of the softmax operation. | |

| void | forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TInput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const override |

| Performs the forward pass of the softmax operation on CUDA. | |

| std::string | getName () const override |

| Gets the name of this operation. | |

Public Member Functions inherited from Mila::Dnn::Compute::UnaryOperation< TDeviceType, TInput, TOutput > | |

| UnaryOperation (OperationType operation_type) | |

| Constructs a UnaryOperation with the specified operation type. | |

| UnaryOperation (OperationType operation_type, std::shared_ptr< DeviceContext > context) | |

| Constructs a UnaryOperation with the specified operation type and device context. | |

| virtual | ~UnaryOperation ()=default |

| Virtual destructor for proper cleanup of derived classes. | |

| virtual void | backward (const Tensor< TInput, MR > &grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_grads) const |

| Executes the backward pass of a unary operation. | |

| virtual void | backward (const Tensor< TInput, MR > &input, const Tensor< TOutput, MR > &output_grad, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameter_grads, Tensor< TInput, MR > &input_grad, const OperationAttributes &properties, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const |

| Executes the comprehensive backward pass of a unary operation. | |

| virtual void | forward (const Tensor< TInput, MR > &input, const std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &parameters, const OperationAttributes &properties, Tensor< TOutput, MR > &output, std::vector< std::shared_ptr< Tensor< TOutput, MR > > > &output_state) const =0 |

| Executes the forward pass of a unary operation. | |

Public Member Functions inherited from Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput > | |

| OperationBase (OperationType operation_type, std::shared_ptr< DeviceContext > context) | |

| Constructs an OperationBase object with a specific device context and compute precision. | |

| virtual | ~OperationBase ()=default |

| Virtual destructor for the OperationBase class. | |

| std::shared_ptr< DeviceContext > | getDeviceContext () const |

| Gets the device context associated with this operation. | |

| DeviceType | getDeviceType () const |

| Gets the device type for this operation. | |

| OperationType | getOperationType () const |

| Gets the operation type enumeration value. | |

Private Attributes | |

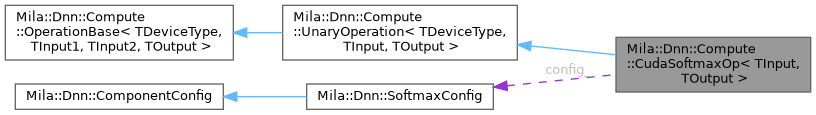

| SoftmaxConfig | config_ |

| Configuration for the softmax operation, including axis and other parameters. | |

Detailed Description

requires ValidFloatTensorTypes<TInput, TOutput>

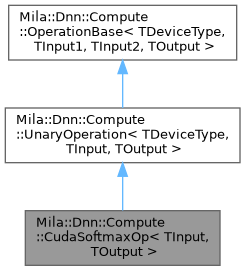

class Mila::Dnn::Compute::CudaSoftmaxOp< TInput, TOutput >

CUDA implementation of the softmax operation for neural networks.

This class provides a CUDA-based implementation of the softmax operation, which converts a vector of real numbers into a probability distribution. The softmax function is commonly used in classification tasks as the final activation function of a neural network, and in attention mechanisms within transformer architectures.

The implementation is optimized for NVIDIA GPUs using CUDA for high-performance computation, especially for large vocabulary sizes typical in language models.

- Template Parameters

-

TInput The data type of the input tensor elements.

TOutput The data type of the output tensor elements (defaults to the input type).

Member Typedef Documentation

◆ MR

| using Mila::Dnn::Compute::CudaSoftmaxOp< TInput, TOutput >::MR = typename CudaDevice::MR |

◆ UnaryOperationBase

| using Mila::Dnn::Compute::CudaSoftmaxOp< TInput, TOutput >::UnaryOperationBase = UnaryOperation<DeviceType::Cuda, TInput, TOutput> |

Constructor & Destructor Documentation

◆ CudaSoftmaxOp() [1/2]

|

inline |

Constructs a new CUDA Softmax operation with the default device context.

Initializes the operation with a CUDA device context (defaults to CUDA:0).

◆ CudaSoftmaxOp() [2/2]

|

inline |

Constructs a new CUDA Softmax operation with a specific device context.

- Parameters

-

context The device context to use for this operation.

- Exceptions

-

std::runtime_error If the context is not for a CUDA device.

Member Function Documentation

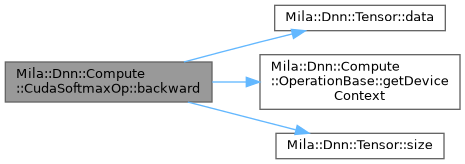

◆ backward()

|

inline |

Performs the backward pass of the softmax operation.

Computes gradients with respect to inputs based on the output gradient. For softmax: dL/dx_i = Σ_j (dL/dy_j * (y_i * (δ_ij - y_j))), where δ_ij is the Kronecker delta.

- Parameters

-

input Input tensor from the forward pass.

output Output tensor from the forward pass (softmax probabilities).

output_gradient Gradient of the loss with respect to the output.

parameters Parameters tensor from forward pass (not used).

parameter_gradients Gradients for parameters (not used).

input_gradient Gradient of the loss with respect to the input.

properties Additional attributes for the operation.

output_state Cache tensors from forward pass.

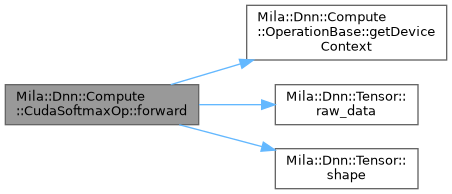

◆ forward()

|

inline override |

Performs the forward pass of the softmax operation on CUDA.

Converts input logits into a probability distribution by taking the exponential of each element and normalizing by their sum. The computation is performed on the GPU using CUDA kernels for optimal performance.

The implementation includes numerical stability improvements by subtracting the maximum value before applying the exponential function.

- Parameters

-

input Input tensor containing logits of shape [B, T, V], where B is batch size, T is sequence length, and V is vocabulary size.

parameters Additional parameters (not used in this operation).

properties Additional attributes for the operation.

output Output tensor of shape [B, T, V] to store the resulting probability distribution.

output_state Cache for intermediate results (not used in this operation).
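The max-subtraction step described above can be sketched on the CPU. This is a minimal reference sketch, not the CUDA kernel used by Mila; the function name `softmax_rows` and the flattened row-major layout (batch size times sequence length rows, each holding V logits) are assumptions made for illustration.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// CPU reference sketch (hypothetical helper, not part of Mila): softmax over
// the last axis of a row-major buffer holding `rows` rows of `V` logits each.
std::vector<float> softmax_rows(const std::vector<float>& logits,
                                std::size_t rows, std::size_t V) {
    std::vector<float> probs(logits.size());
    if (V == 0) {
        return probs;  // nothing to normalize
    }
    for (std::size_t r = 0; r < rows; ++r) {
        const float* x = logits.data() + r * V;
        float* y = probs.data() + r * V;

        // Subtracting the row maximum keeps every exponent <= 0,
        // so exp() cannot overflow even for very large logits.
        const float max_val = *std::max_element(x, x + V);

        float sum = 0.0f;
        for (std::size_t i = 0; i < V; ++i) {
            y[i] = std::exp(x[i] - max_val);
            sum += y[i];
        }
        // Normalize so each row sums to one.
        for (std::size_t i = 0; i < V; ++i) {
            y[i] /= sum;
        }
    }
    return probs;
}
```

Subtracting the maximum does not change the result mathematically, since the common factor exp(-max) cancels between numerator and denominator. Without it, logits around 1000 would overflow `float` in `exp` and produce NaN after the division.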

◆ getName()

|

inline override virtual |

Gets the name of this operation.

- Returns

- std::string The name of the operation ("Cuda::SoftmaxOp").

Implements Mila::Dnn::Compute::OperationBase< TDeviceType, TInput1, TInput2, TOutput >.

Member Data Documentation

◆ config_

|

private |

Configuration for the softmax operation, including axis and other parameters.

The documentation for this class was generated from the following file:

- /home/runner/work/Mila/Mila/Mila/Src/Dnn/Compute/Operations/Cuda/CudaSoftmaxOp.ixx